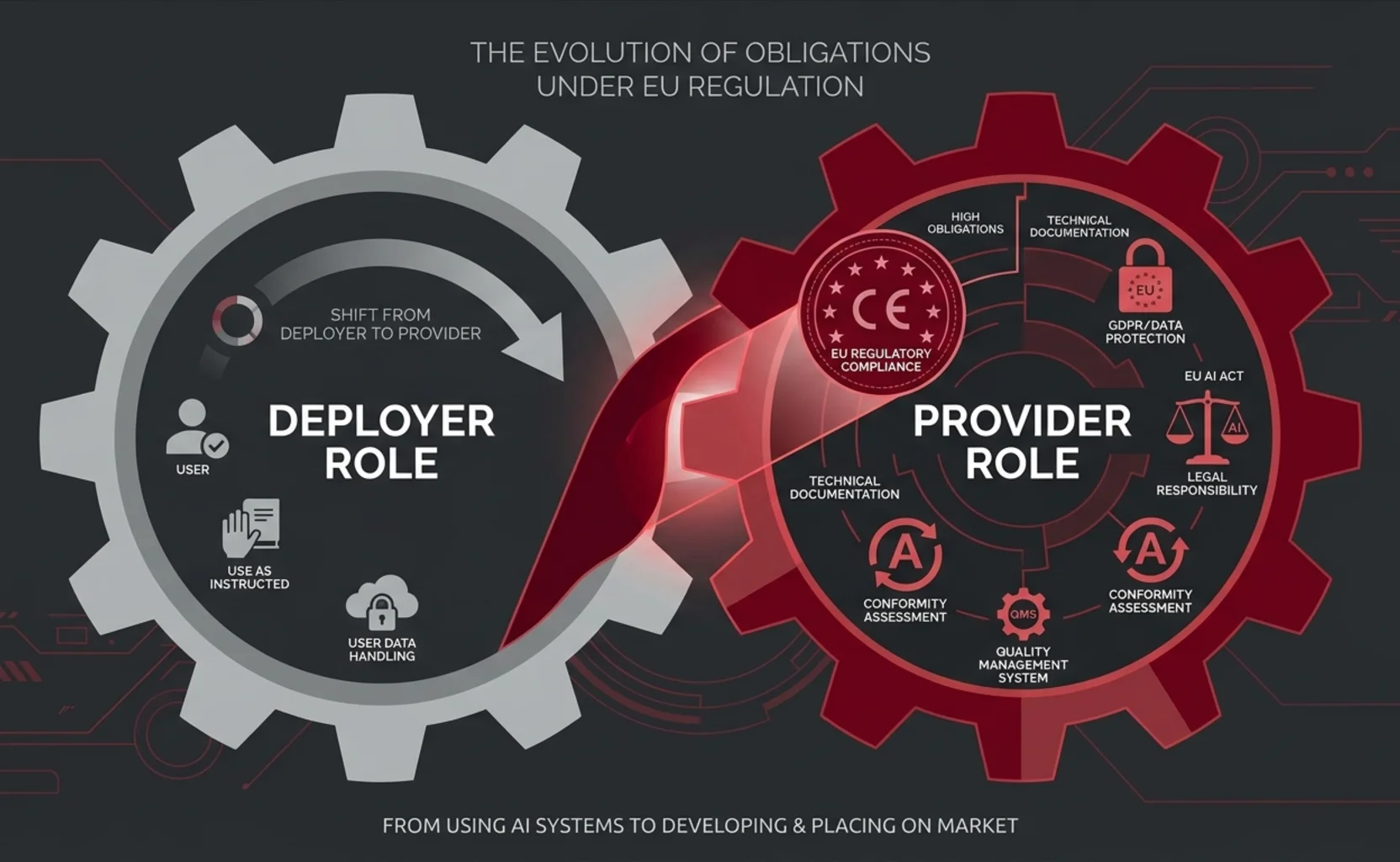

In our previous analysis, we examined who qualifies as a deployer under the AI Act, what obligations actually apply, and what the European Commission has clarified regarding AI agents. The picture, however, would be incomplete without addressing the other side of the equation: the circumstances under which an organization that uses an AI agent may find itself reclassified as a provider — with a fundamentally different set of obligations.

That is not a theoretical scenario. As AI agents are increasingly customized, integrated into business workflows, and redistributed under new brands, the boundary between deploying and providing becomes operationally critical. The AI Act addresses this through a precise mechanism: Article 25, which establishes the conditions under which a deployer is treated as a provider.

We refer to this situation as the “involuntary provider” — not as a normative category, but as a descriptive one. It captures the practical reality of organizations that assume provider obligations without having intended to, and often without being aware of it.

The three doors to reclassification under Article 25

Article 25(1) of Regulation (EU) 2024/1689 identifies three scenarios in which an entity other than the original provider is treated as a provider of a high-risk AI system and assumes the provider obligations relating to high-risk AI systems, including compliance with the requirements of Chapter III and the relevant conformity procedures:

(a) Branding. The entity places its own name or trademark on a high-risk AI system already placed on the market or put into service. That is the most straightforward trigger: if you present the system as your own, the AI Act treats you as the provider — regardless of whether you developed or modified it.

(b) Substantial modification. The entity makes a substantial modification to a high-risk AI system in such a way that it remains a high-risk system. Article 3(23) defines substantial modification as a change to the AI system after its placing on the market or putting into service, which is not foreseen or planned by the provider and as a result of which the compliance of the system with the applicable requirements is affected, or the intended purpose for which the system has been assessed is modified.

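(c) Change of intended purpose. The entity modifies the intended purpose of an AI system, including a general-purpose AI system, that was not classified as high-risk and has already been placed on the market or put into service, in such a way that the system becomes a high-risk system in accordance with Article 6 (which includes the use cases listed in Annex III).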

It is essential to note that this mechanism operates exclusively in the domain of high-risk AI systems. A deployer who customizes a minimal-risk or limited-risk system does not trigger Article 25 reclassification — although other obligations (e.g., transparency under Article 50, or GPAI-related obligations under Articles 51–56) may still apply.
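The three triggers can be read as a small decision rule. The sketch below is only an orientation aid under simplifying assumptions: the `SystemFacts` class, its field names, and the `article_25_triggers` function are hypothetical, and each boolean stands in for a legal assessment that in practice requires case-by-case analysis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemFacts:
    """Hypothetical fact pattern for one AI system; all field names are illustrative."""
    high_risk_before: bool          # system was already high-risk before the entity intervened
    own_name_or_trademark: bool     # entity places its own name or trademark on the system
    substantial_modification: bool  # the change meets the Art. 3(23) definition
    high_risk_after: bool           # system is high-risk after the entity's intervention
    intended_purpose_changed: bool  # entity modified the intended purpose


def article_25_triggers(f: SystemFacts) -> list[str]:
    """Return the Art. 25(1) letters that this fact pattern appears to trigger."""
    triggers = []
    # (a) Branding of a system that is already high-risk.
    if f.high_risk_before and f.own_name_or_trademark:
        triggers.append("25(1)(a)")
    # (b) Substantial modification of a high-risk system that remains high-risk.
    if f.high_risk_before and f.substantial_modification and f.high_risk_after:
        triggers.append("25(1)(b)")
    # (c) Change of intended purpose that makes a non-high-risk system high-risk.
    if not f.high_risk_before and f.intended_purpose_changed and f.high_risk_after:
        triggers.append("25(1)(c)")
    return triggers


# Hypothetical example: a general-purpose agent repurposed for recruitment screening.
repurposed = SystemFacts(False, False, False, True, True)
print(article_25_triggers(repurposed))  # ['25(1)(c)']

# Hypothetical example: a minimal-risk chatbot rebranded under the deployer's own name.
rebranded_low_risk = SystemFacts(False, True, False, False, False)
print(article_25_triggers(rebranded_low_risk))  # []
```

The second example returns an empty list because none of the three triggers can fire when the system is not high-risk either before or after the intervention.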

Where organizations get it wrong

The reclassification mechanism under Article 25 is precise, but its practical implications are often misunderstood. The following scenarios illustrate where organizations may inadvertently cross the line from deployer to provider when working with AI agents. Each scenario is analyzed against the applicable legal provisions.

Fine-tuning and retraining

An organization that fine-tunes an AI agent on proprietary data to alter its behavior in a domain-specific context may be performing a substantial modification within the meaning of Article 3(23). The critical question is not whether fine-tuning occurred, but whether it affects the system’s compliance with the applicable requirements or modifies its intended purpose. Light parameter adjustments within the boundaries foreseen by the original provider are generally not substantial modifications. Significant retraining that changes the system’s outputs, risk profile, or domain of application may well be.
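The Article 3(23) test described above has a simple logical shape: a change made after placing on the market, not foreseen by the provider, that either affects compliance or modifies the intended purpose. A minimal sketch of that shape follows. The function name and parameters are hypothetical, and deciding each input remains a legal and technical judgment that code cannot make.

```python
def is_substantial_modification(
    after_market_placement: bool,
    foreseen_by_provider: bool,
    affects_compliance: bool,
    changes_intended_purpose: bool,
) -> bool:
    """Boolean reading of the Art. 3(23) definition; inputs are assumed to be assessed elsewhere."""
    # Changes made before market placement, or planned by the provider, fall outside the definition.
    if not after_market_placement or foreseen_by_provider:
        return False
    # Either consequence is sufficient on its own.
    return affects_compliance or changes_intended_purpose


# Light adjustment within the limits the provider documented.
print(is_substantial_modification(True, True, False, False))  # False

# Retraining the provider did not plan for, which affects compliance.
print(is_substantial_modification(True, False, True, False))  # True
```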

Orchestration of multi-agent systems

When an organization deploys multiple AI agents in a coordinated workflow — where the output of one agent serves as the input for another, and the overall system behavior is determined by the orchestration logic rather than by any single agent — the question arises whether the composite system constitutes a new AI system distinct from its components.

That is an interpretive question that the AI Act does not address explicitly. In my reading, the answer depends on the specific circumstances: who exercises design control over the composite architecture, whether the resulting system has a distinct intended purpose from that of its individual components, and whether its overall behaviour — including emergent properties arising from the interaction of agents — gives rise to a risk profile that the original providers did not foresee or assess.

If these conditions are met and the orchestrated system operates in a high-risk domain (e.g., credit assessment, recruitment, clinical decision support), the organization that designs and controls the orchestration could, in principle, be treated as the provider of that composite system. That remains, however, a case-by-case assessment that requires careful analysis of the system’s architecture, governance, and intended purpose.

White-labelling and rebranding

That is the most straightforward scenario. Article 25(1)(a) is explicit: placing one’s own name or trademark on a high-risk AI system already on the market triggers reclassification. An organization that licenses a high-risk AI agent, rebrands it under its own name, and offers it to its clients is, for the purposes of the AI Act, the provider of that system, with all corresponding obligations.

Sector-specific adaptation

An organization that takes a general-purpose AI agent and adapts it for use in a specific sector listed in Annex III — such as healthcare, education, employment, law enforcement, or access to essential services — may be modifying its intended purpose in a way that brings it within the high-risk classification. That triggers Article 25(1)(c): the system was not classified as high-risk, but its new intended purpose makes it so.

Integration into proprietary products or services

When an AI agent is embedded into a product or service and offered to third parties, the organization that controls the integration (and therefore determines how the agent interacts with users, what data it processes, and what actions it can take) may be the entity that defines the intended purpose of the resulting system. If that intended purpose falls within Annex III, the organization is the provider.

The open-source question

A common misunderstanding deserves specific attention. The AI Act provides for a differentiated regime for open-source AI, but this does not amount to a blanket exemption. It is important to distinguish between two separate regimes.

Open-source AI systems. Article 2(12) excludes AI systems released under free and open-source licenses from the scope of the Regulation, unless they are placed on the market or put into service as high-risk AI systems or as systems falling under Article 5 (prohibited practices) or Article 50 (transparency obligations). In other words, the open-source exemption does not apply to high-risk systems. An organization that takes an open-source AI agent framework, builds on it, and deploys the resulting system in a high-risk context cannot rely on this exemption. It may well be a provider under Article 25.

Open-source GPAI models. A separate and distinct regime applies to general-purpose AI models. Article 53(2) provides a partial exemption from certain transparency obligations for providers of GPAI models released under open-source licenses — but this exemption falls away entirely if the model is classified as having systemic risk under Article 51. That is a different legal plane from Article 25 reclassification. Still, it is relevant for organizations building AI agents on open-source foundation models: the partial exemption for the underlying model does not shield the downstream system from high-risk obligations.
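The two regimes can be kept apart by treating them as two independent checks, one for the AI system and one for the underlying GPAI model. The sketch below is illustrative only. Both function names and all parameters are hypothetical, and the `transparency_art50` flag reflects the wording of Article 2(12) itself, which also carves out systems falling under Article 50.

```python
def open_source_system_exemption(
    open_source: bool,
    high_risk: bool,
    prohibited_art5: bool,
    transparency_art50: bool = False,
) -> bool:
    """Art. 2(12): is the open-source carve-out available for an AI *system*?"""
    return open_source and not (high_risk or prohibited_art5 or transparency_art50)


def open_source_gpai_partial_exemption(open_source: bool, systemic_risk: bool) -> bool:
    """Art. 53(2): is the partial exemption available for a GPAI *model*?"""
    return open_source and not systemic_risk


# Hypothetical case: an agent built on an open-source model without systemic risk,
# then deployed in a high-risk context.
model_exempt = open_source_gpai_partial_exemption(open_source=True, systemic_risk=False)
system_exempt = open_source_system_exemption(open_source=True, high_risk=True, prohibited_art5=False)
print(model_exempt, system_exempt)  # True False
```

The output illustrates the point made above: the partial exemption for the model says nothing about the downstream system, which is assessed separately.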

An important caveat

Not every advanced use of an AI agent triggers reclassification. The AI Act’s mechanism is precise and conditional. Customization that remains within the parameters foreseen and documented by the original provider, configuration of settings explicitly offered as deployer options, and use of the system for its stated intended purpose — all of these remain within the deployer’s domain.

The risk lies not in using AI agents, but in modifying them—or their context of use—beyond the boundaries the original provider anticipated, without recognizing the legal consequences.

Decision table: deployer or provider?

The following table maps common operational scenarios to their likely legal qualification under the AI Act. It is intended as a practical orientation tool — not as a substitute for case-by-case legal analysis, which must account for the specific characteristics of the system, the nature of the modification, and the intended purpose.

| # | Scenario | Likely qualification | Legal reference |
|---|---|---|---|
| 1 | Use of the AI agent as-is, without any modification, for its intended purpose | Deployer | Art. 3(4) |
| 2 | Configuration of parameters within the options documented and foreseen by the provider | Deployer | Art. 3(4); Art. 25 a contrario |
| 3 | Light fine-tuning on proprietary data without altering the system's core functionality or risk profile | Grey area: case-by-case assessment required | Art. 3(23); Art. 25(1)(b) |
| 4 | Significant retraining or fine-tuning that changes the system's behavior, outputs, or risk profile | Probable reclassification as provider | Art. 3(23); Art. 25(1)(b) |
| 5 | Distribution of the AI agent under the organization's own name or trademark | Provider | Art. 25(1)(a) |
| 6 | Adaptation of a general-purpose agent for use in an Annex III sector (e.g., healthcare, recruitment, education) | Provider | Art. 25(1)(c) |
| 7 | Orchestration of multiple agents into a composite multi-step system operating in a high-risk domain | Grey area: depends on design control, distinct intended purpose, and whether the composite system constitutes a new AI system | Art. 3(1); Art. 3(23); Art. 25(1)(b)/(c) |
| 8 | Use of an open-source AI agent framework in a high-risk context (Annex III) | Probable provider: no automatic open-source exemption for high-risk systems | Art. 2(12); Art. 25 |

This table is intended as a general orientation tool. It does not replace case-by-case legal qualification, which depends on the system’s actual characteristics, the nature of the modification, and the specific intended purpose.
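For organizations that track several AI agent projects at once, the table can be transcribed into a small lookup used for internal triage. The sketch below is one possible encoding, assuming the high-risk context the table presupposes. The `Qualification` ordering, the `DECISION_TABLE` name, and the `triage` function are hypothetical, and the result only flags which references to examine.

```python
from enum import IntEnum


class Qualification(IntEnum):
    """Ordered from least to most onerous; the ordering is an illustrative assumption."""
    DEPLOYER = 0
    GREY_AREA = 1
    PROBABLE_PROVIDER = 2
    PROVIDER = 3


# Scenario number -> (likely qualification, legal reference), transcribed from the table.
DECISION_TABLE = {
    1: (Qualification.DEPLOYER, "Art. 3(4)"),
    2: (Qualification.DEPLOYER, "Art. 3(4); Art. 25 a contrario"),
    3: (Qualification.GREY_AREA, "Art. 3(23); Art. 25(1)(b)"),
    4: (Qualification.PROBABLE_PROVIDER, "Art. 3(23); Art. 25(1)(b)"),
    5: (Qualification.PROVIDER, "Art. 25(1)(a)"),
    6: (Qualification.PROVIDER, "Art. 25(1)(c)"),
    7: (Qualification.GREY_AREA, "Art. 3(1); Art. 3(23); Art. 25(1)(b)/(c)"),
    8: (Qualification.PROBABLE_PROVIDER, "Art. 2(12); Art. 25"),
}


def triage(scenario_ids):
    """Return the most onerous qualification among the scenarios and the references to check."""
    rows = [DECISION_TABLE[i] for i in scenario_ids]
    worst = max(q for q, _ in rows)
    # Only scenarios that go beyond plain deployer status need a closer look.
    refs = sorted({ref for q, ref in rows if q is not Qualification.DEPLOYER})
    return worst, refs


# Hypothetical project: documented parameter configuration (2) plus light fine-tuning (3).
worst, refs = triage([2, 3])
print(worst.name, refs)  # GREY_AREA ['Art. 3(23); Art. 25(1)(b)']
```

Taking the most onerous qualification is a deliberately conservative design choice: a single provider-type scenario is enough to warrant a full legal assessment of the project.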

What changes when reclassification occurs: provider obligations checklist

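When reclassification is triggered under Article 25, the organization assumes the full set of provider obligations for high-risk AI systems established in Chapter III of the AI Act: the requirements of Section 2 (Articles 8–15) and the provider obligations of Section 3 (Articles 16–22), together with the related provisions on conformity assessment, registration, and post-market monitoring. The following checklist summarises the key requirements.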

| # | Obligation | What it entails | Legal ref. |
|---|---|---|---|
| 1 | Risk management system | Establish and maintain a continuous, iterative risk management process throughout the entire lifecycle of the system | Art. 9 |
| 2 | Data governance | Ensure training, validation, and testing data meet quality criteria: relevance, representativeness, accuracy, completeness | Art. 10 |
| 3 | Technical documentation | Draw up and keep up to date technical documentation demonstrating compliance, before the system is placed on the market | Art. 11 |
| 4 | Record-keeping (logging) | Design the system to enable automatic recording of events relevant for traceability | Art. 12 |
| 5 | Transparency and information | Ensure the system is designed to allow deployers to interpret outputs and use the system appropriately; provide instructions for use | Art. 13 |
| 6 | Human oversight | Design the system so that it can be effectively overseen by natural persons during the period of use | Art. 14 |
| 7 | Accuracy, robustness and cybersecurity | Ensure appropriate levels of accuracy, robustness, and protection against security threats throughout the lifecycle | Art. 15 |
| 8 | Quality management system | Implement a documented quality management system covering policies, procedures, compliance strategies, data management, record-keeping, and resource management | Art. 17 |
| 9 | Conformity assessment | Carry out the applicable conformity assessment procedure before placing the system on the market or putting it into service | Art. 43 |
| 10 | EU database registration | Register the high-risk AI system in the EU database before placing it on the market or putting it into service | Art. 49 |
| 11 | Post-market monitoring | Establish and document a proportionate post-market monitoring system to collect and analyze data on the system's performance throughout its lifecycle | Art. 72 |

The gap between the deployer’s and the provider’s obligations is substantial. A deployer of a high-risk system must ensure human oversight, monitor the system, retain logs, and, where applicable, conduct a fundamental rights impact assessment (FRIA) under Article 27. A provider must do all of that and, in addition, build the system to meet all essential requirements from the outset, establish a quality management system, produce comprehensive technical documentation, carry out a conformity assessment, and set up a post-market monitoring plan. That is a qualitative shift: from operational due diligence to full product-safety responsibility.
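The size of that gap can be made concrete with a simple gap analysis against the checklist. The sketch below assumes the organization records which obligations it already covers. The `PROVIDER_CHECKLIST` keys and the `gap_analysis` function are hypothetical names, and marking an item as covered is itself a judgment the code does not verify.

```python
# Obligation key -> legal reference, transcribed from the checklist above.
PROVIDER_CHECKLIST = {
    "risk_management_system": "Art. 9",
    "data_governance": "Art. 10",
    "technical_documentation": "Art. 11",
    "record_keeping": "Art. 12",
    "transparency_and_information": "Art. 13",
    "human_oversight": "Art. 14",
    "accuracy_robustness_cybersecurity": "Art. 15",
    "quality_management_system": "Art. 17",
    "conformity_assessment": "Art. 43",
    "eu_database_registration": "Art. 49",
    "post_market_monitoring": "Art. 72",
}


def gap_analysis(in_place: set[str]) -> list[tuple[str, str]]:
    """Return the checklist items, with their references, that are not yet covered."""
    unknown = in_place - set(PROVIDER_CHECKLIST)
    if unknown:
        # Guard against typos silently counting as coverage.
        raise ValueError(f"Unknown obligation keys: {sorted(unknown)}")
    return [(name, ref) for name, ref in PROVIDER_CHECKLIST.items() if name not in in_place]


# Hypothetical former deployer that already has oversight and logging controls.
gaps = gap_analysis({"human_oversight", "record_keeping"})
print(len(gaps))  # 9
print(gaps[0])    # ('risk_management_system', 'Art. 9')
```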

First 5 actions if a risk of reclassification as a provider emerges

2. Assess whether the modification is substantial within the meaning of Art. 3(23) — i.e., whether it affects compliance or alters the intended purpose.

3. Verify whether the scenario falls under Art. 25(1)(a), (b) or (c).

4. Suspend any placing on the market, rebranding, or further distribution until a gap analysis has been completed.

5. Initiate an assessment of technical documentation, risk management, and conformity requirements (Arts. 9–17, 43).

The real risk: legal misclassification

The most insidious risk for organizations working with AI agents is not technical but legal: a failure to correctly identify one’s own role in the AI Act value chain. An organization that believes itself to be a deployer but is, in fact, a provider complies with the wrong set of obligations, or with none at all, and is exposed to enforcement action and liability without the corresponding compliance infrastructure.

This risk is amplified in the AI agents context by several factors: the modular architecture of agentic systems, which may obscure the boundary between using and providing; the ease of customisation, which can lead to modifications that cross the Article 25 threshold without a deliberate decision to do so; the open-source ecosystem, where the absence of licensing costs may create a false sense of regulatory freedom; and the multi-party chains typical of AI agent deployments, where responsibilities are distributed across multiple actors and the provider role may not map neatly to any single entity.

For the analytical framework on how the AI Act constructs its system of subject roles and legal positions, see my paper on subject roles in the AI Act. For the compliance architecture that providers of AI agents must implement once reclassification has occurred, see Nannini et al., AI Agents Under EU Law (arXiv:2604.04604).

Resources

Further reading

- AI Act: deployers, AI agents and transparency — the deployer perspective and operational framework

- Code of Practice Art. 50: analysis of the first draft

- Digital Omnibus on AI: Parliament rewrites the Commission’s rules

- AI Act and EU digital legislation: complexity and overlaps

Concluding note

Regulatory precision is not an academic luxury — it is a professional responsibility. The question is not whether an organization calls itself a deployer, but whether its actual conduct — its modifications, its branding, its use of the system — places it in the position of a provider under the AI Act. For many organizations currently integrating AI agents into their products and services, the answer may be less comfortable than expected.

This post is part of the series dedicated to the implementation of the AI Act. For real-time updates, follow us on the blog and subscribe to the NicFab Newsletter.